Kormushev, P., Calinon, S. and Caldwell, D.G. (2010)

Robot Motor Skill Coordination with EM-based Reinforcement Learning

In Proc. of the IEEE/RSJ Intl Conf. on Intelligent Robots and Systems (IROS), Taipei, Taiwan, pp. 3232-3237.

Abstract

We present an approach allowing a robot to acquire new motor skills by learning the couplings across motor control variables. The demonstrated skill is first encoded in a compact form through a modified version of Dynamic Movement Primitives (DMP) which encapsulates correlation information. Expectation-Maximization based Reinforcement Learning is then used to modulate the mixture of dynamical systems initialized from the user's demonstration. The approach is evaluated on a torque-controlled 7-DOF Barrett WAM robotic arm. Two skill learning experiments are conducted: a reaching task where the robot needs to adapt the learned movement to avoid an obstacle, and a dynamic pancake-flipping task.

Bibtex reference

@inproceedings{Kormushev10IROS,
  author="Kormushev, P. and Calinon, S. and Caldwell, D. G.",
  title="Robot Motor Skill Coordination with EM-based Reinforcement Learning",
  booktitle="Proc. {IEEE/RSJ} Intl Conf. on Intelligent Robots and Systems ({IROS})",
  year="2010",
  month="October",
  address="Taipei, Taiwan",
  pages="3232--3237"
}

Video

The video shows a 7-DOF Barrett WAM manipulator learning to flip pancakes by reinforcement learning. The motion is encoded in a mixture of basis force fields through an extension of Dynamic Movement Primitives (DMP) that represents the synergies across the different variables through stiffness matrices. An inverse dynamics controller with variable stiffness is used for reproduction.
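The variable-stiffness behavior described above can be illustrated with a short Python sketch (the downloadable demos are in Matlab; this is not a translation of them). It assumes time-driven, normalized Gaussian activation weights h_i(t) and blends the per-primitive stiffness matrices as K(t) = Σ_i h_i(t) K_i. The function names, the width choice and the stiffness values are hypothetical, chosen only for illustration.

```python
import numpy as np

def activation_weights(n_steps, n_states, dt=0.01):
    """Normalized Gaussian activations h_i(t), shape (n_steps, n_states).

    Assumption: primitives are driven by time, with centers spread evenly
    over the movement and a width equal to the spacing between centers.
    """
    t = np.arange(n_steps) * dt
    centers = np.linspace(t[0], t[-1], n_states)
    width = max((t[-1] - t[0]) / max(n_states - 1, 1), dt)
    g = np.exp(-0.5 * ((t[:, None] - centers[None, :]) / width) ** 2)
    # Normalize so that the weights at each time step sum to 1.
    return g / g.sum(axis=1, keepdims=True)

def blended_stiffness(H, Ks):
    """Time-varying stiffness K(t) = sum_i h_i(t) K_i, shape (n_steps, D, D)."""
    return np.einsum("tk,kij->tij", H, Ks)

# Hypothetical example: a stiff primitive followed by a compliant one.
H = activation_weights(n_steps=100, n_states=2)
Ks = np.array([200.0 * np.eye(3), 20.0 * np.eye(3)])
K_t = blended_stiffness(H, Ks)
```

Because a non-negative combination of symmetric positive-definite matrices is itself symmetric positive definite, the blended K(t) remains a valid stiffness as long as every K_i is.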

The skill is first demonstrated via kinesthetic teaching and then refined with the Policy learning by Weighting Exploration with the Returns (PoWER) algorithm. After 50 trials, the robot learns that the first part of the task requires a stiff behavior to throw the pancake in the air, while the second part requires the hand to be compliant in order to catch the pancake without it bouncing off the pan.
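The PoWER update can be summarized as a return-weighted average of the exploration around the current parameters, θ ← θ + Σ_k (θ_k − θ) R_k / Σ_k R_k, computed over the best rollouts seen so far. The Python sketch below assumes non-negative returns and a fixed number of retained rollouts (`n_best`, a hypothetical parameter). The toy return function is a stand-in for the real pancake-flipping reward, which is not reproduced here.

```python
import numpy as np

def power_update(theta, rollouts, n_best=5):
    """One PoWER-style update from a history of (theta_k, R_k) rollouts.

    theta_k are the perturbed parameters tried in rollout k and R_k >= 0 is
    its return. Only the n_best highest-return rollouts are kept
    (importance sampling), and the new parameters are
        theta + sum_k (theta_k - theta) R_k / sum_k R_k.
    """
    best = sorted(rollouts, key=lambda r: r[1], reverse=True)[:n_best]
    num = np.zeros_like(theta, dtype=float)
    den = 0.0
    for theta_k, R_k in best:
        num += (theta_k - theta) * R_k
        den += R_k
    if den <= 0.0:
        return np.array(theta, dtype=float)  # no positive return: no update
    return theta + num / den

def toy_return(theta, target):
    """Hypothetical return, positive and maximal when theta equals target."""
    return float(np.exp(-np.sum((theta - target) ** 2)))

rng = np.random.default_rng(0)
target = np.array([0.5, -0.3])
theta = np.zeros(2)
history = []
for trial in range(50):  # 50 trials, mirroring the number quoted above
    theta_k = theta + rng.normal(scale=0.1, size=theta.shape)  # exploration
    history.append((theta_k, toy_return(theta_k, target)))
    theta = power_update(theta, history)
```

The update reduces to a return-weighted average of the retained θ_k, so a single rollout cannot push the parameters outside the region spanned by the best samples.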

Video credits: Dr Petar Kormushev, Dr Sylvain Calinon

Source code


Usage

Unzip the file and run 'demo1' in Matlab.

Reference

- Calinon, S., Sardellitti, I. and Caldwell, D.G. (2010) Learning-based control strategy for safe human-robot interaction exploiting task and robot redundancies. Proc. of the IEEE/RSJ Intl Conf. on Intelligent Robots and Systems (IROS).

Demo 1 - Mixture of correlated mass-spring-damper systems

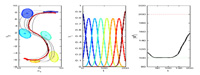

Learning and reproduction of a movement through a mixture of dynamical systems (similar to Dynamic Movement Primitives), where variability and correlation information along the movement and among the different examples is encapsulated as a full stiffness matrix in a set of mass-spring-damper systems.
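A minimal Python sketch of reproduction with such a mixture, assuming the acceleration form ẍ = Σ_i h_i [K_i (μ_i − x) − κ_v ẋ] with a scalar damping gain κ_v shared by all primitives and semi-implicit Euler integration. The function names and parameters are hypothetical and this is not the Matlab code from the download; it only shows how off-diagonal stiffness terms couple the variables.

```python
import numpy as np

def mixture_acceleration(x, dx, h, mus, Ks, kv):
    """Acceleration from a weighted mixture of mass-spring-damper systems.

    x, dx : current position and velocity, shape (D,)
    h     : activation weights of the primitives at this step, shape (K,)
    mus   : virtual attractor points, shape (K, D)
    Ks    : full stiffness matrices, shape (K, D, D)
    kv    : damping gain (assumed scalar and shared by all primitives)
    """
    ddx = np.zeros_like(x, dtype=float)
    for h_i, mu_i, K_i in zip(h, mus, Ks):
        # Off-diagonal entries of K_i make an error in one variable
        # produce a pull along the others (the coordination information).
        ddx += h_i * (K_i @ (mu_i - x) - kv * dx)
    return ddx

def reproduce(x0, H, mus, Ks, kv, dt=0.01):
    """Semi-implicit Euler integration; H holds one row of weights per step."""
    x = np.array(x0, dtype=float)
    dx = np.zeros_like(x)
    traj = [x.copy()]
    for h in H:
        ddx = mixture_acceleration(x, dx, h, mus, Ks, kv)
        dx = dx + ddx * dt   # update the velocity first...
        x = x + dx * dt      # ...then the position with the new velocity
        traj.append(x.copy())
    return np.array(traj)

# Coupling example: an error along the first axis also accelerates the second.
K_coupled = np.array([[[10.0, 5.0], [5.0, 10.0]]])
a = mixture_acceleration(np.zeros(2), np.zeros(2), np.array([1.0]),
                         np.array([[1.0, 0.0]]), K_coupled, kv=1.0)
# a == [10.0, 5.0]
```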

For each primitive (or state), the virtual attractor point and its associated stiffness matrix are learned through least-squares regression.
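One way to cast this regression, assumed here rather than taken from the demo code: writing b_i = K_i μ_i turns the model ẍ + κ_v ẋ = Σ_i h_i K_i (μ_i − x) into Σ_i h_i (b_i − K_i x), which is linear in the unknowns (b_i, K_i). The Python sketch stacks one regressor row per sample, solves with `numpy.linalg.lstsq`, and recovers μ_i = K_i⁻¹ b_i. It assumes the activation weights sum to 1 at each step and that κ_v is known. Nothing here enforces symmetry or positive definiteness of the estimated K_i, which a practical implementation would likely need; the function name and the synthetic data are hypothetical.

```python
import numpy as np

def fit_attractors_stiffness(X, dX, ddX, H, kv):
    """Least-squares fit of attractors mu_i and full stiffness matrices K_i.

    Assumed model (rows of H sum to 1, damping gain kv known):
        ddx + kv * dx = sum_i h_i * (b_i - K_i @ x),  with b_i = K_i @ mu_i.
    X, dX, ddX : positions, velocities, accelerations, shape (T, D)
    H          : activation weights, shape (T, K)
    Returns mus with shape (K, D) and Ks with shape (K, D, D).
    """
    T, D = X.shape
    n_states = H.shape[1]
    Y = ddX + kv * dX                                  # (T, D) targets
    # Regressor row for sample t: [h_1, -h_1 x^T, ..., h_K, -h_K x^T]
    ones_x = np.hstack([np.ones((T, 1)), -X])          # (T, 1 + D)
    Phi = (H[:, :, None] * ones_x[:, None, :]).reshape(T, n_states * (1 + D))
    W, *_ = np.linalg.lstsq(Phi, Y, rcond=None)        # (K * (1 + D), D)
    W = W.reshape(n_states, 1 + D, D)
    bs = W[:, 0, :]                                    # b_i = K_i @ mu_i
    Ks = np.transpose(W[:, 1:, :], (0, 2, 1))          # blocks hold K_i^T
    mus = np.array([np.linalg.solve(K, b) for K, b in zip(Ks, bs)])
    return mus, Ks

# Synthetic check: noise-free data generated from known parameters.
rng = np.random.default_rng(1)
T, D, kv = 300, 2, 3.0
K_true = np.array([[[30.0, 5.0], [5.0, 20.0]], [[10.0, -2.0], [-2.0, 40.0]]])
mu_true = np.array([[1.0, -1.0], [0.5, 2.0]])
X = rng.normal(size=(T, D))
dX = rng.normal(size=(T, D))
h1 = rng.uniform(0.05, 0.95, size=T)
H = np.stack([h1, 1.0 - h1], axis=1)
Y = sum(H[:, i:i + 1] * ((mu_true[i] - X) @ K_true[i].T) for i in range(2))
ddX = Y - kv * dX
mus, Ks = fit_attractors_stiffness(X, dX, ddX, H, kv)
```

With noise-free data and sufficiently varied states and weights, the fit recovers the generating parameters up to floating-point error; with real demonstrations the residual reflects how well the mixture model explains the movement.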