EPFL Student Project Proposals

The projects below are available as either Bachelor/Master semester projects or Master thesis projects (the content will be adjusted accordingly). Suggestions for other projects (or variants of existing projects) are also welcome, as long as they fit within the group's research interests.

Contact: sylvain.calinon@idiap.ch

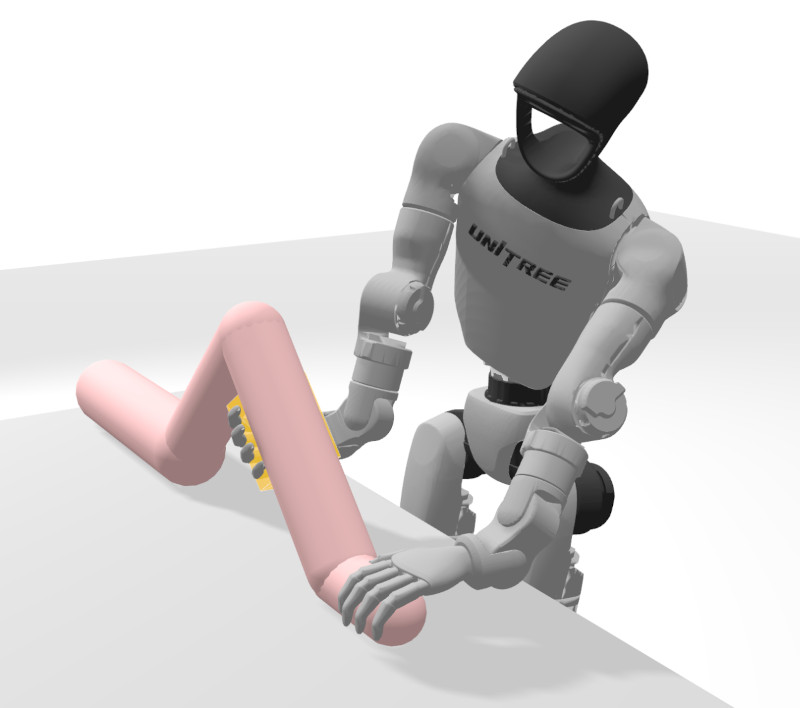

Whole-Body Manipulation with Explicit Self-Balancing Control for Humanoid Robots

This project aims to develop a whole-body control strategy for a humanoid robot that explicitly integrates self-balancing with manipulation.

Goals of the project:

A whole-body control framework will be investigated in which balance and manipulation are treated jointly, rather than relying on a predefined walking or balance controller. The controller will explicitly account for the mass and inertia of the arms and of the manipulated objects, allowing the robot to adapt its balance while lifting, carrying, or manipulating boxes. Momentum-based or optimization-based control formulations will be explored to regulate center-of-mass motion, contact forces, and end-effector tasks simultaneously. The project will be validated on the Unitree G1 humanoid platform, with part of the work conducted on-site at the Swiss Cobotics Competence Center (S3C) in Biel.
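To give a rough idea of what regulating several tasks simultaneously can look like, here is a minimal sketch of a velocity-level weighted least-squares resolution. It is only an illustration under strong assumptions, not the formulation the project will adopt: the function name `solve_weighted_tasks`, the 4-joint system, and the Jacobian values are all hypothetical, and contact forces, dynamics, and joint limits are ignored. It finds joint velocities that keep the horizontal center-of-mass velocity at zero while moving an end-effector at a desired velocity.

```python
import numpy as np

def solve_weighted_tasks(jacobians, targets, weights, damping=1e-6):
    """Joint velocities minimizing sum_i w_i ||J_i dq - v_i||^2 + damping ||dq||^2.

    Hypothetical helper for illustration; solves the normal equations directly.
    """
    n = jacobians[0].shape[1]
    H = damping * np.eye(n)   # regularization keeps H invertible
    g = np.zeros(n)
    for J, v, w in zip(jacobians, targets, weights):
        H += w * J.T @ J
        g += w * J.T @ v
    return np.linalg.solve(H, g)

# Made-up Jacobians for a 4-joint system (not derived from any robot model).
J_com = np.array([[0.5, 0.3, 0.1, 0.0]])   # horizontal center-of-mass velocity
J_ee = np.array([[1.0, 0.0, 0.5, 0.2],
                 [0.0, 1.0, 0.0, 0.6]])    # planar end-effector velocity

v_com = np.array([0.0])        # balance task: keep the center of mass still
v_ee = np.array([0.1, 0.05])   # manipulation task: desired hand velocity

# The balance task gets a larger weight than the manipulation task.
dq = solve_weighted_tasks([J_com, J_ee], [v_com, v_ee], weights=[10.0, 1.0])
print("joint velocities:", dq)
print("CoM velocity:", J_com @ dq, "end-effector velocity:", J_ee @ dq)
```

In this toy case the system is redundant (four joints, three task dimensions), so both tasks can be met almost exactly. When tasks conflict, the weights decide how the error is shared, which is one simple way to trade off balance against manipulation. A payload would change the center-of-mass Jacobian, which is why its mass has to be modeled.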

Prerequisites: Linear algebra, rigid-body dynamics, programming in Python or C++, basic knowledge of robot control

References:

Calinon, S. (2024). A Math Cookbook for Robot Manipulation.

Robotics codes from scratch (RCFS)

Level: Master (semester project or PDM)

Contact: sylvain.calinon@idiap.ch